Spill The Course – Designing a Trust-First Review Platform for Online Courses

Helping learners avoid wasted time and money by transforming scattered opinions into trustworthy, structured reviews.

Role

Founder, UX Designer, Product Strategist. End-to-end ownership from discovery to design, development collaboration, and QA.

Timeframe

8 months (idea to launch)

Platform Status

Live, actively being populated with courses and reviews

Project Overview

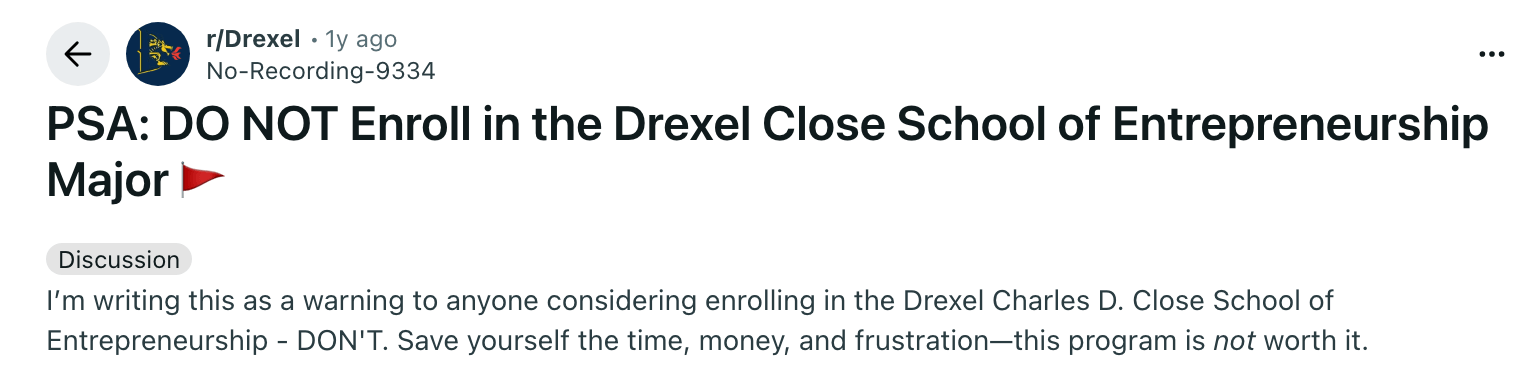

Online courses, especially those sold by YouTube creators and independent instructors, are expensive and difficult to evaluate. Reviews on creator websites are often overly positive or controlled by the seller, and students on Reddit often complain that they wasted thousands of dollars on overhyped courses. Marketplace platforms like Udemy, meanwhile, rely on long, generic paragraph reviews that fail to answer decision-critical questions. As a result, learners are forced to spend hours across Reddit and forums trying to piece together trustworthy insights.

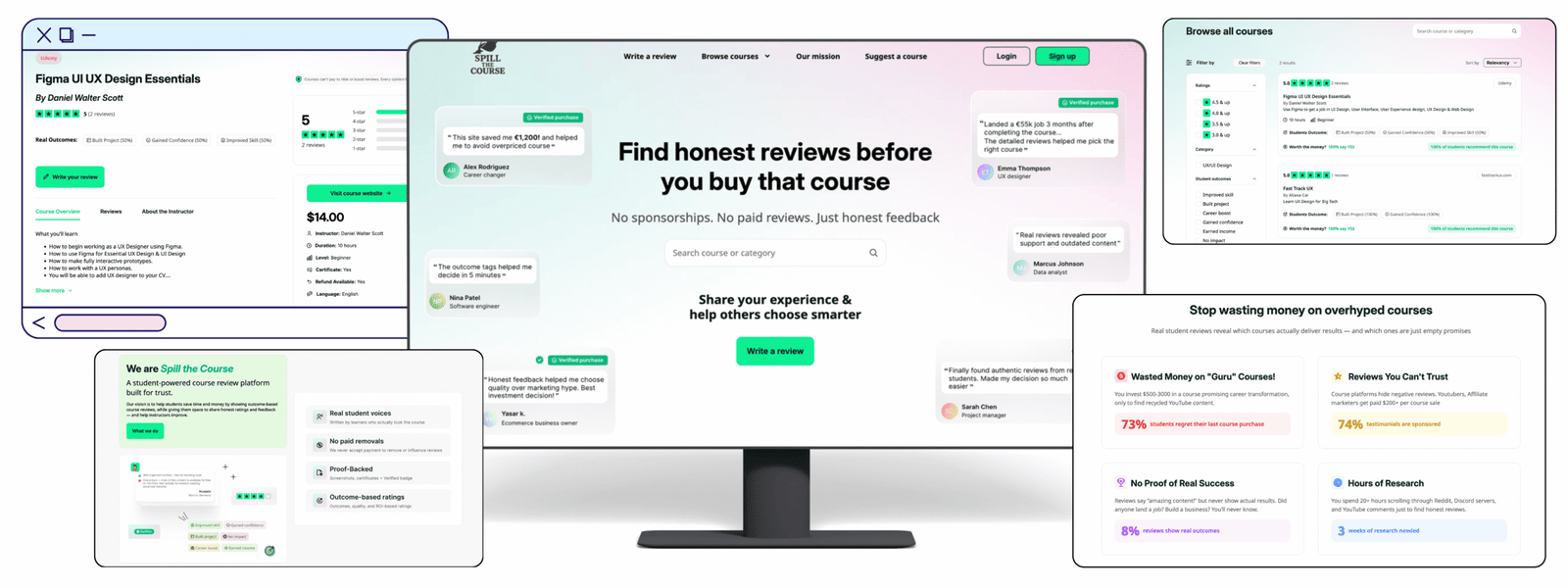

Spill the Course is a dedicated review platform that replaces scattered and generic opinions with structured, trust-focused reviews. By guiding reviewers through specific questions and surfacing real outcomes, the platform helps learners make confident decisions faster and avoid wasting time and money on overhyped courses.

Problem

Learners cannot quickly and confidently evaluate whether an online course is worth their time and money because reviews are scattered, biased, or unstructured.

- There is no independent, centralized platform for reviews of courses from YouTube creators, influencers, and independent platforms, so many learners end up wasting thousands of dollars.

- Many users reported wasting hundreds or thousands of dollars on overhyped courses due to lack of trustworthy information.

- Reviews on creator websites are overwhelmingly positive and lack credibility.

- Marketplace reviews (e.g. Udemy) are long, generic, and unstructured, so students must read everything to understand results.

- Learners regularly search “X course scam or legit.”

- Reddit and Quora are useful but scattered and researching them is time-consuming.

Solution

Designed a dedicated review platform that prioritizes trust, structure, and decision-critical insights over volume and marketing hype.

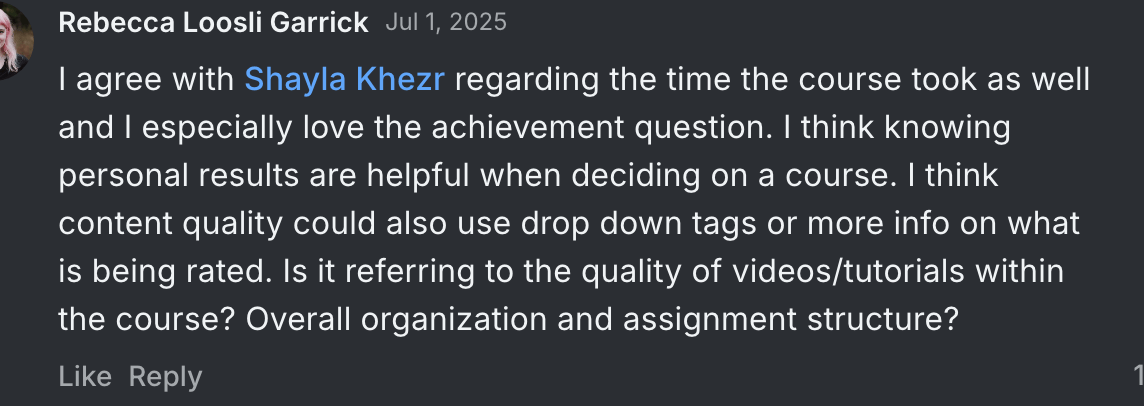

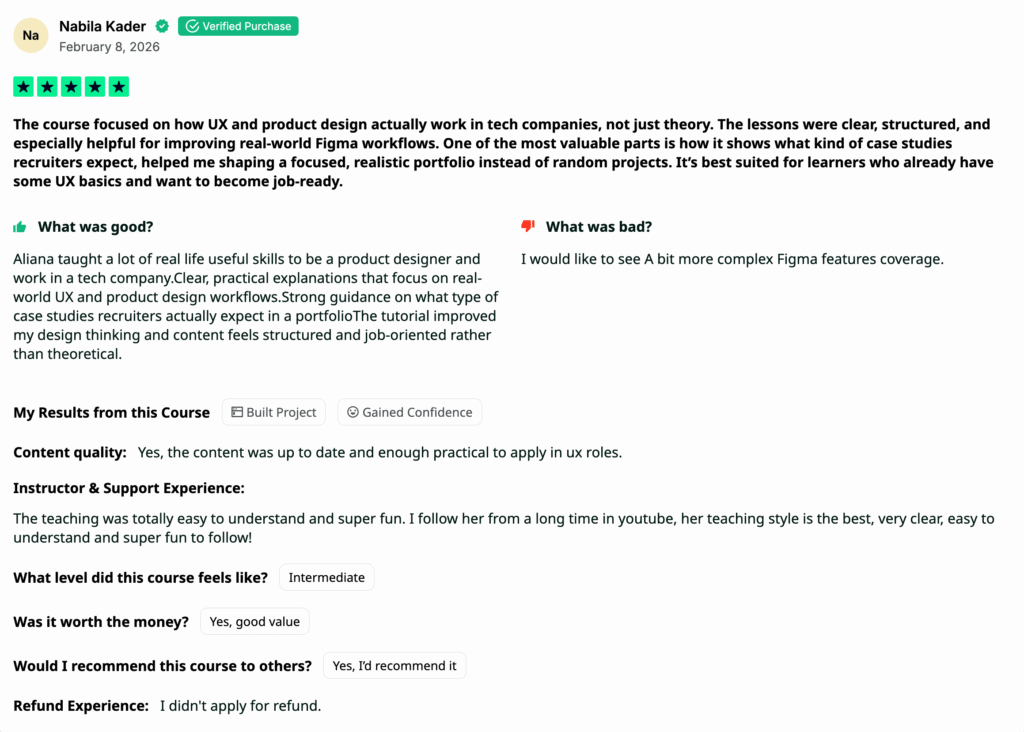

1. Structured Reviews Instead of Generic Comments

Instead of one open text box, I introduced a guided review format:

- Overall rating

- What was good

- What could be improved

- Course level (student’s view)

- Outcome tags

- Worth the money?

- Would you recommend it?

This turns opinions into structured insights and makes comparisons easier.
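As a rough illustration, the guided format above could be modeled as a single record with one field per question. This is only a sketch under stated assumptions: the field names, the 1 to 5 rating scale, and the three course-level values are hypothetical and not taken from the live platform.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List


class CourseLevel(Enum):
    # Assumed level options; the real form may offer different choices.
    BEGINNER = "beginner"
    INTERMEDIATE = "intermediate"
    ADVANCED = "advanced"


@dataclass
class StructuredReview:
    overall_rating: int            # assumed 1-5 scale
    what_was_good: str
    what_could_be_improved: str
    course_level: CourseLevel      # level as perceived by the student
    worth_the_money: bool
    would_recommend: bool
    outcome_tags: List[str] = field(default_factory=list)

    def __post_init__(self) -> None:
        # Reject ratings outside the assumed scale.
        if not 1 <= self.overall_rating <= 5:
            raise ValueError("overall_rating must be between 1 and 5")
```

Keeping each answer in its own field, rather than in one text blob, is what makes reviews comparable across courses.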

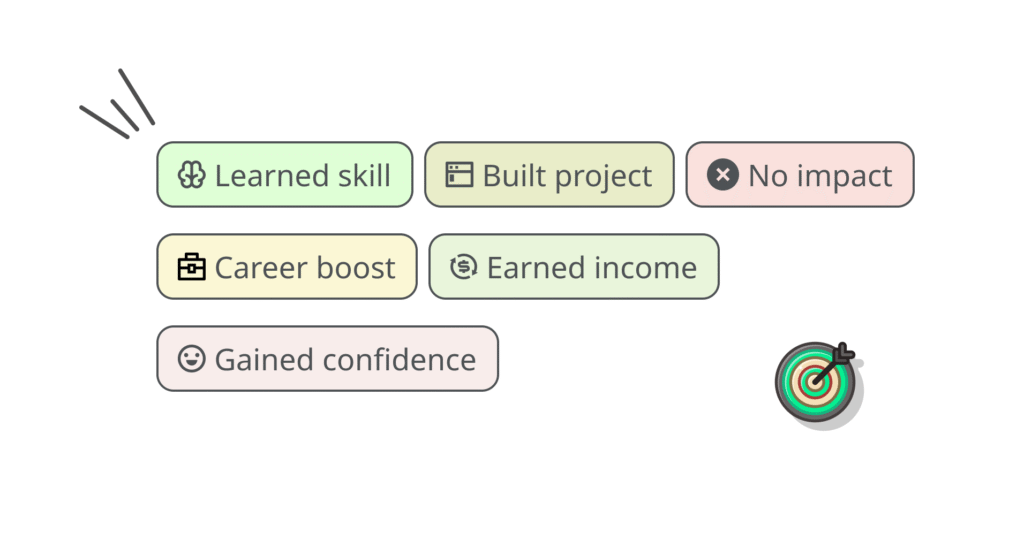

2. Outcome-Based Decision Signals

I introduced standardized outcome tags such as:

- Improved Skill

- Built Project

- Career Boost

- Earned Income

- Gained Confidence

- No Impact

Course cards show key results and recommendation rates, so users can decide in seconds without reading long reviews.
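The course-card signals described above could be derived from the structured reviews roughly as follows. This is a minimal sketch: the function name `summarize_course`, the dictionary keys, and the choice to show the three most frequent tags are assumptions for illustration, not the platform's actual logic.

```python
from collections import Counter
from typing import Any, Dict, List


def summarize_course(reviews: List[Dict[str, Any]]) -> Dict[str, Any]:
    """Aggregate structured reviews into the signals shown on a course card."""
    total = len(reviews)
    if total == 0:
        # No reviews yet: percentages are undefined, so report None.
        return {
            "review_count": 0,
            "recommend_pct": None,
            "worth_money_pct": None,
            "top_outcomes": [],
        }

    recommend = sum(1 for r in reviews if r["would_recommend"])
    worth = sum(1 for r in reviews if r["worth_the_money"])
    # Count how often each outcome tag appears across all reviews.
    tag_counts = Counter(tag for r in reviews for tag in r["outcome_tags"])

    return {
        "review_count": total,
        "recommend_pct": round(100 * recommend / total),
        "worth_money_pct": round(100 * worth / total),
        "top_outcomes": [tag for tag, _ in tag_counts.most_common(3)],
    }
```

Because every review answers the same yes/no questions and picks from the same tag list, these aggregates need no text analysis.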

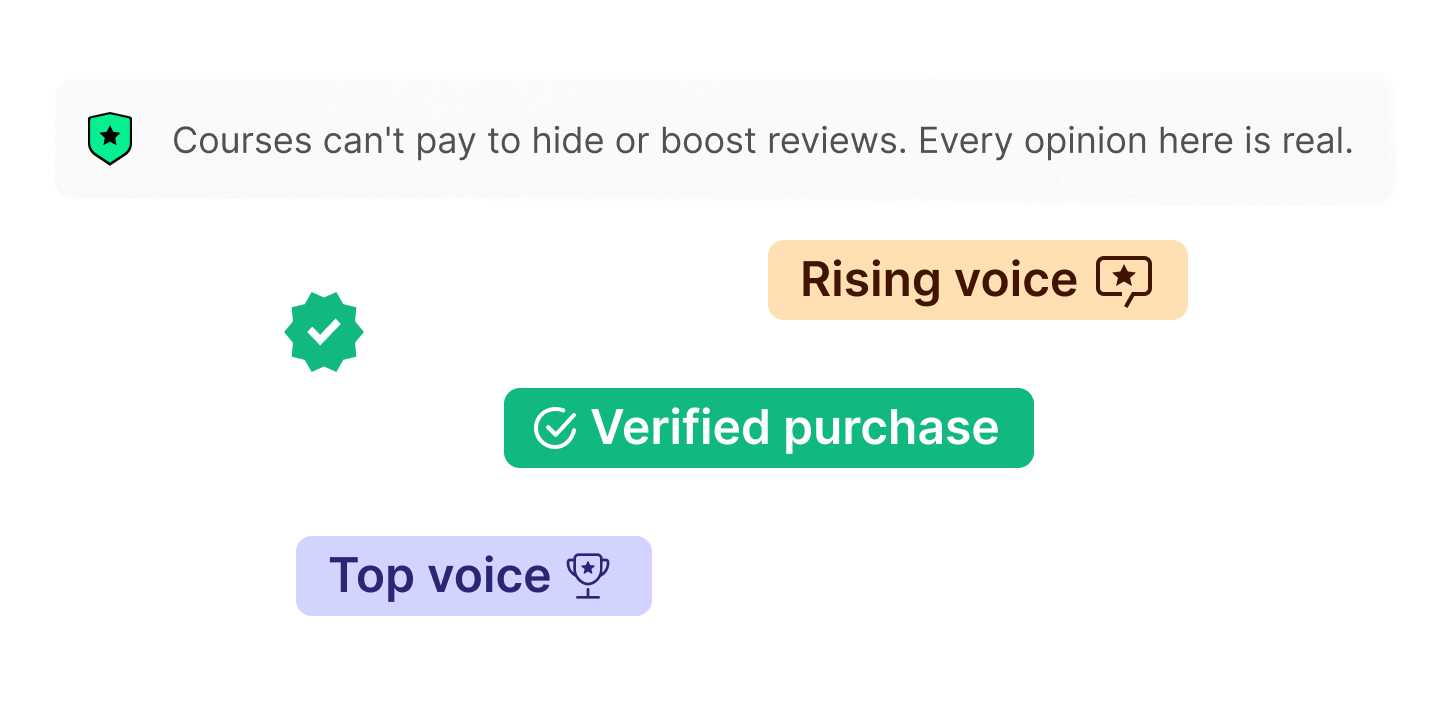

3. Trust Infrastructure

I implemented:

- Verified User badge

- Verified Purchase badge (proof attached)

- Transparent moderation policy

- Clear statement: we do not accept payment to remove reviews

Badges are visually differentiated to avoid confusion and maintain credibility.

Impact (Early Validation)

Before building the full product, I tested the structured review format with potential users who had previously purchased online courses.

- 10+ users commented that they preferred structured questions over open text reviews.

- 80% said the “Worth the money” and “Would you recommend it?” questions helped them decide faster.

- 2 users suggested adding one more field to clarify course level mismatch.

- 7 users said they would actively use this type of structured review platform before buying a course.

Expected Product Impact

If scaled, the platform aims to:

⏱ -20%

Users will be able to decide on the right course faster.

💸 Users will be less likely to waste money on the wrong courses.

💡 Instructors could improve their teaching based on reviews.

❌ Users will be able to evaluate credibility and easily identify misleading, fake “guru,” or low-quality courses.

The idea started from my personal frustration. I wanted to evaluate a course by a well-known YouTube UX designer, but every review on the creator’s website was positive. I searched Google, Reddit, and Quora, but there was nothing clear. For UX courses on Udemy, reviews existed, but they were long and generic and didn’t answer my questions: I wanted to know whether the content was still up to date, whether the course was truly project-based or mostly theoretical, and whether it would realistically deliver results.

I ended up spending hours digging through scattered threads to make a decision. A year later, I faced the same problem again. That repetition motivated me to solve this problem.

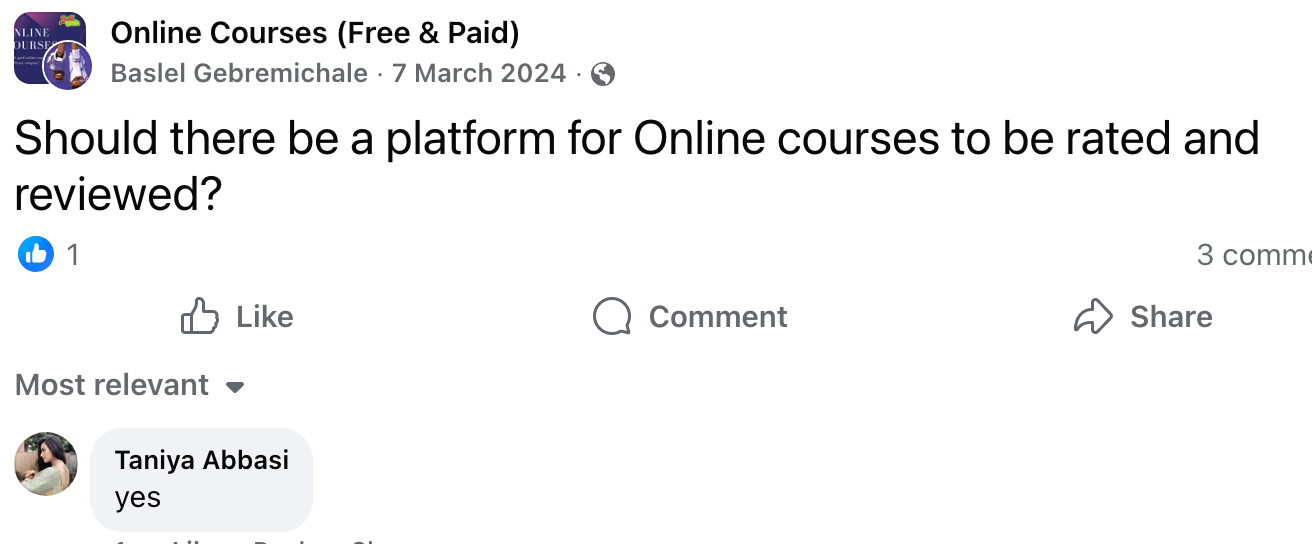

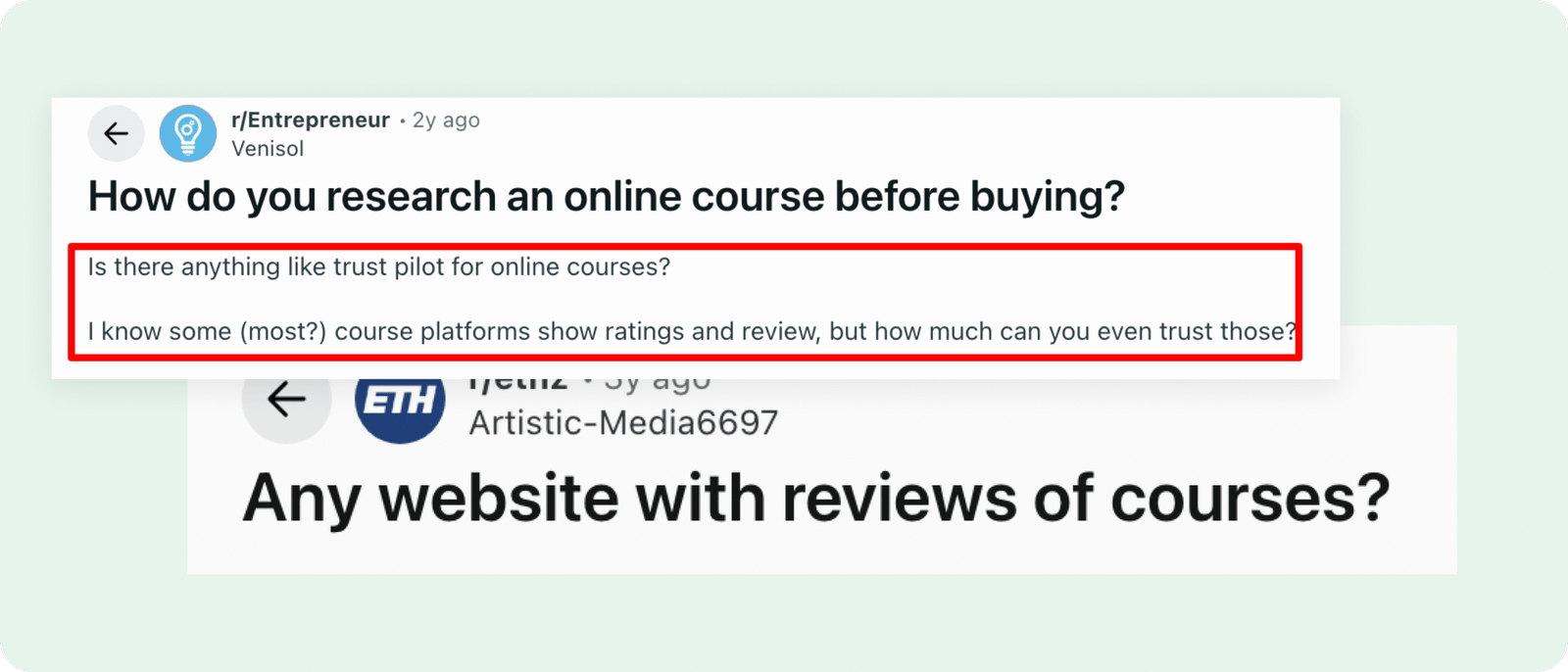

Research & Validation

What I did

- Analyzed multi-year Reddit and Quora threads related to course legitimacy

- Posted in relevant subreddits to test interest in a dedicated review platform

- Spoke with students from courses I had taken previously

- Used tools like "AnswerThePublic" to understand how people search around course legitimacy

- Studied review platforms like Trustpilot, Glassdoor, G2, and TripAdvisor to understand successful review mechanics

- Used AI tools (perplexity.ai, Grok.com) to synthesize recurring pain points across forums and communities

Key Insights

- Learners on Reddit repeatedly ask whether there is a centralized place to find reviews of the many online courses they see advertised, especially high‑ticket ‘guru’ programs.

- Learners consistently search “X course scam or legit.”

- Existing review platforms mostly cover large marketplaces like Udemy, Coursera, or bootcamps. Expensive creator-led courses from platforms like YouTube, Maven, or Designlab are often not listed at all, which means users cannot see independent reviews for them.

- Current review sites focus on ratings and general comments, but they rarely capture outcome-based feedback such as real results, project work, career impact, or return on investment.

- Many forum users mentioned that before buying a course, they research multiple things separately — reviews, instructor background, student results, refund experience — often across different threads and forums. This process is time-consuming and inefficient.

- Across platforms, hundreds of users openly complain about scam-like or overhyped courses, but these complaints are scattered and hard to evaluate in one place.

Competitive Analysis

Before designing Spill the Course, I analyzed platforms across two categories:

1. Course aggregation platforms

2. Established review-driven platforms

| Platform | Primary Focus | Strengths | Limitations | How Spill the Course Addresses the Gap |
|---|---|---|---|---|
| classcentral.com | Course aggregation (universities + marketplaces) | | | Supports influencer, independent, and marketplace courses with structured, outcome-based reviews and visible decision signals |
| coursereport.com | Bootcamp reviews | | | Applies structured review model beyond bootcamps and introduces fast-scan outcome tags and recommendation percentages |
| switchup.org | Bootcamp comparison & ranking | | | Surfaces “Worth the Money” and “Would Recommend” percentages with structured outcomes for faster decision-making |
| spillthecourse.com | Independent course review platform (influencers + marketplaces + independent programs) | Structured review format, outcome-based tags, recommendation %, value signals, add-missing-course flow | Early-stage platform, relies on user contributions | Designed to reduce research time, centralize independent reviews, and make course evaluation clearer and faster |

Review-Driven Platforms I Studied

G2:

- Studied how they structure reviews with clear categories

- Analyzed how they show recommendation percentages and rating breakdowns

Used this model to design outcome-based course reviews

Glassdoor:

- Observed how reviews focus on decision-making factors

- Studied how negative reviews stay visible to build trust

Applied a transparency-first approach to my platform

TripAdvisor:

- Studied how they handle “not found” searches without losing users

- Analyzed their “add new place” flow

Designed a low-friction “Add Course” option to prevent drop-off

Trustpilot:

- Researched how they position themselves as independent

- Studied how they communicate moderation and review policies

Applied clear messaging around authenticity and no paid review removal

Design Approach

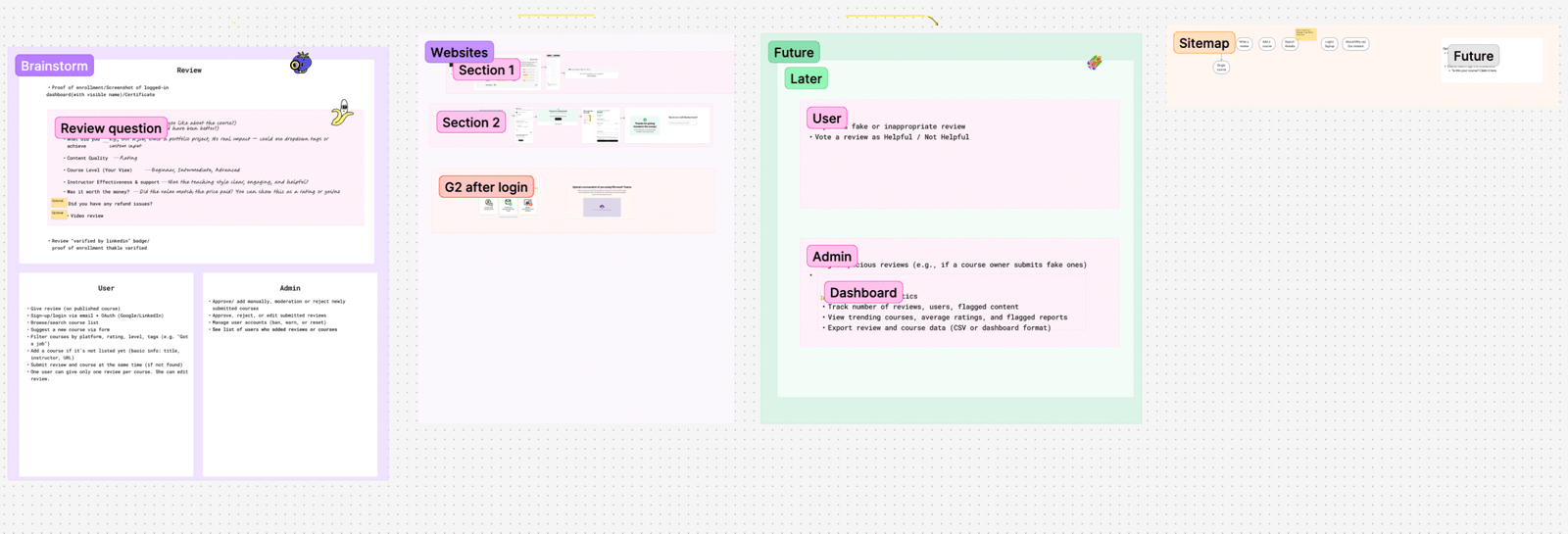

Figma brainstorm: Before designing anything, I opened FigJam and mapped the whole system.

- Mapped review questions

- Defined what users and admins can do

- Prioritized the most important features for the MVP

- Created sitemap

- Defined future user features and admin tools

- Studied how other platforms collect and display reviews and mapped their flows

AI exploration: Instead of starting with traditional low-fidelity wireframes, I used AI UI tools (such as Lovable and UX Pilot) to quickly explore layout directions and interaction patterns. This allowed me to test multiple concepts fast, then refine and systemize everything properly in Figma.

After validating the structure, I redesigned everything properly in Figma with auto layout and consistent components.

Key User Flows

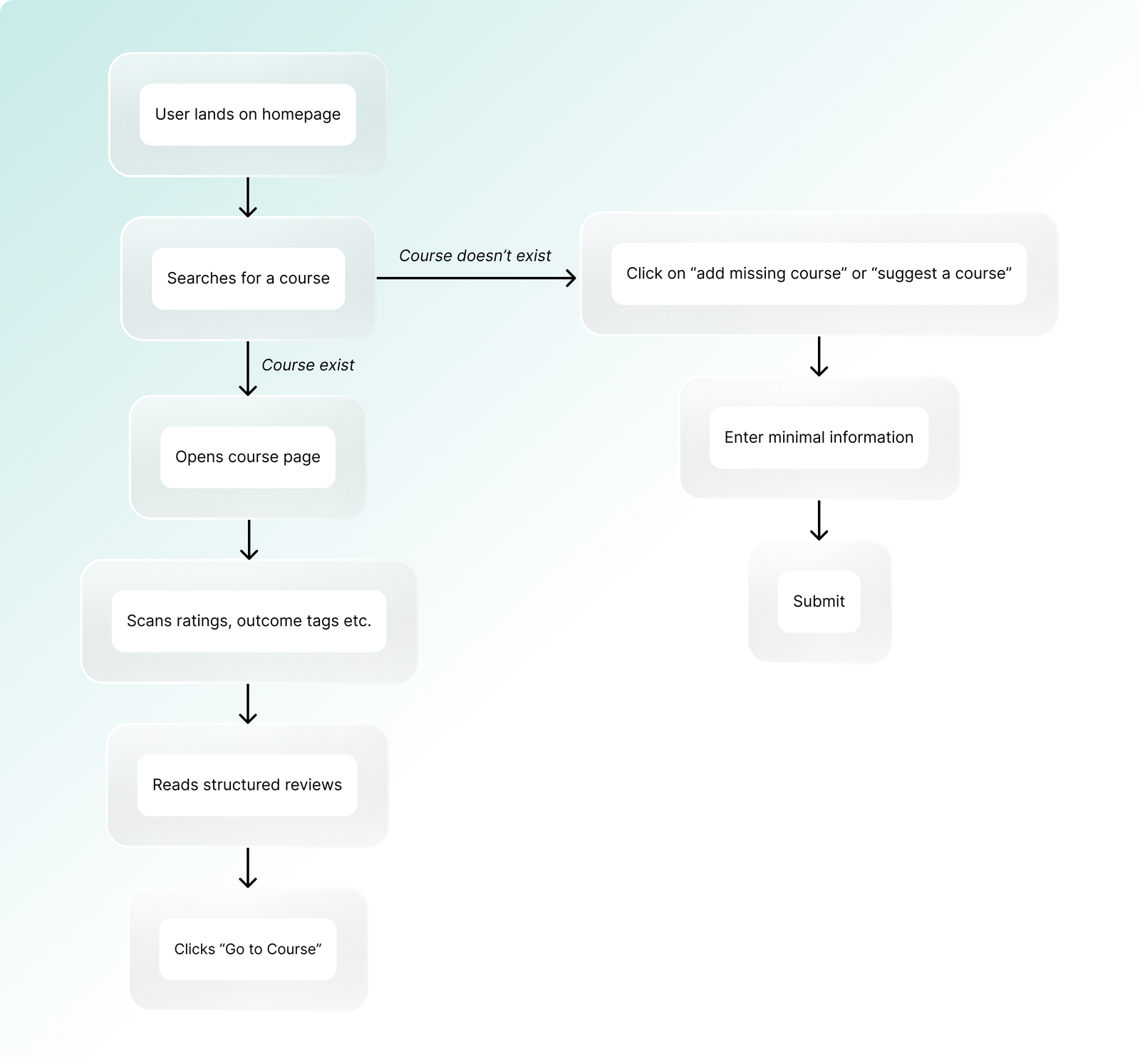

1. Finding and evaluating a course

UX Focus:

- Help users answer “Is this course worth it?” in minutes, not hours.

- If the course isn’t listed, give users a simple fallback option, preventing drop-off while capturing real search demand data.

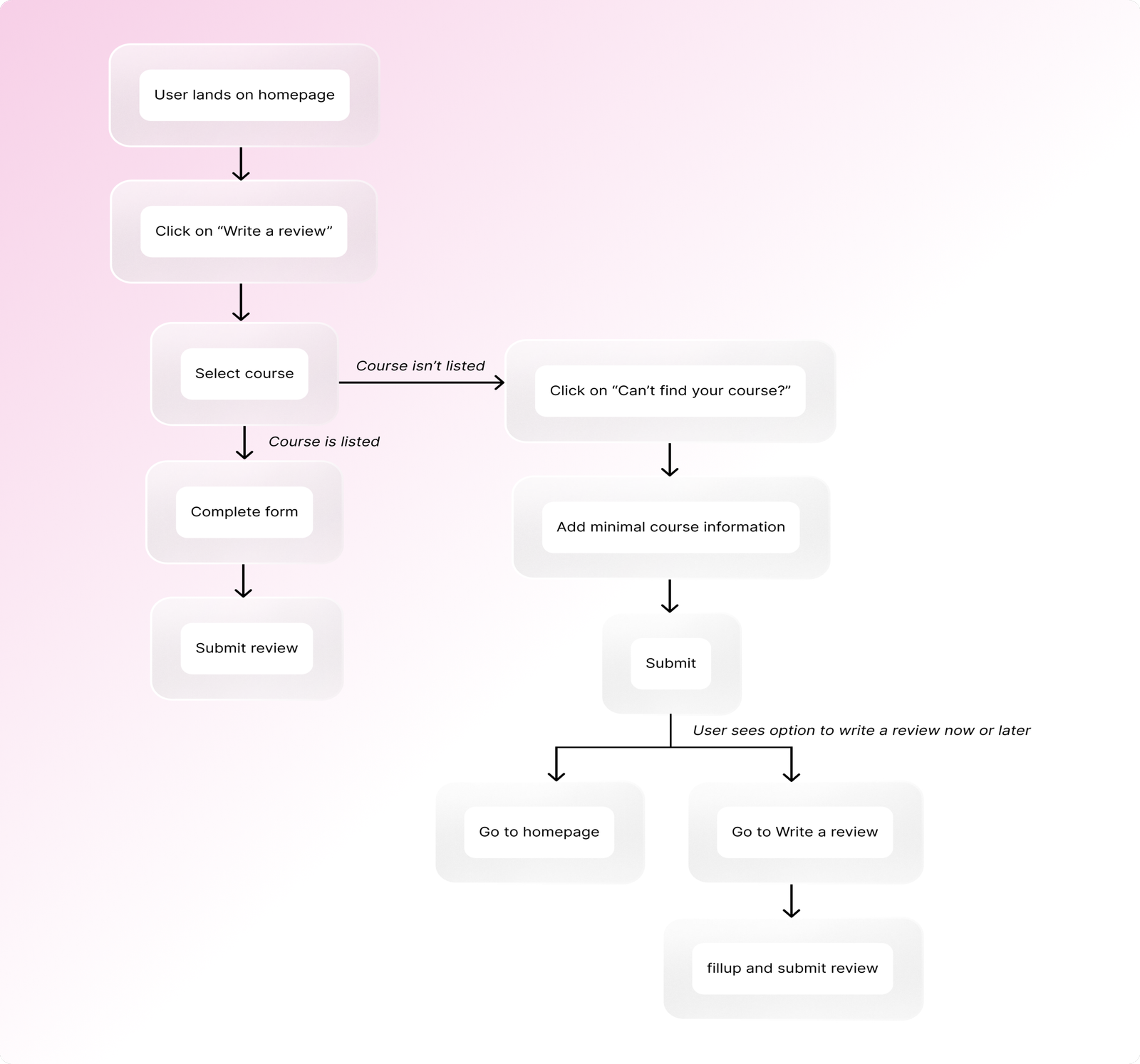

2. Writing a Review

UX Focus:

- Turn emotional opinions into structured, comparable insights.

- Users can add and review the course immediately on the same page, removing extra steps and keeping contribution momentum high.

3. Browse & Compare Courses

Goal: A student wants to explore options.

Flow:

- User browses category page

- Sees course cards with ratings, tags, etc.

- Opens multiple courses in new tabs

- Compares structured signals

- Decides

UX Focus:

- Decision in seconds, not paragraphs.

4. Contribute & Build Credibility

Goal: A returning user wants recognition.

Flow:

- User logs in

- Submits second or fifth review

- Receives badge

- Sees badge next to name on reviews

- Decides

UX Focus:

- Encourage repeat contributions with simple gamification.

Testing & Iteration

- Conducted usability testing on core flows such as writing a review, adding a course, and viewing trust badges

- Performed edge-case testing around authentication methods (email signup, Google login, LinkedIn connection)

- Focused heavily on validation logic to enforce one review per user per course

- Faced duplicate prevention issues due to multiple login methods (email, Google, LinkedIn), which created account-linking and validation gaps

- Worked closely with the developer to refine backend logic, testing and retesting for several days across different account scenarios until one-review-per-user enforcement was consistent
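One way to close the duplicate-review gap described above is to resolve every login method to a single internal user before checking the one-review-per-course rule. The sketch below is not the platform's actual backend: the table layout, the function names, and the decision to link accounts by normalized email are all assumptions, and linking by email is only safe when each provider has verified that address.

```python
import sqlite3


def open_db() -> sqlite3.Connection:
    """Create an in-memory database with a hypothetical schema."""
    conn = sqlite3.connect(":memory:")
    conn.executescript("""
        CREATE TABLE users (
            id INTEGER PRIMARY KEY,
            email TEXT NOT NULL UNIQUE
        );
        CREATE TABLE identities (
            provider TEXT NOT NULL,        -- e.g. 'email', 'google', 'linkedin'
            provider_uid TEXT NOT NULL,
            user_id INTEGER NOT NULL REFERENCES users(id),
            PRIMARY KEY (provider, provider_uid)
        );
        CREATE TABLE reviews (
            user_id INTEGER NOT NULL REFERENCES users(id),
            course_id TEXT NOT NULL,
            body TEXT NOT NULL,
            UNIQUE (user_id, course_id)    -- one review per user per course
        );
    """)
    return conn


def resolve_user(conn: sqlite3.Connection, provider: str,
                 provider_uid: str, email: str) -> int:
    """Map any login method to one internal user id."""
    row = conn.execute(
        "SELECT user_id FROM identities WHERE provider = ? AND provider_uid = ?",
        (provider, provider_uid),
    ).fetchone()
    if row:
        return row[0]
    # Assumption: accounts sharing a verified email belong to the same person.
    normalized = email.strip().lower()
    row = conn.execute(
        "SELECT id FROM users WHERE email = ?", (normalized,)
    ).fetchone()
    if row:
        user_id = row[0]
    else:
        user_id = conn.execute(
            "INSERT INTO users (email) VALUES (?)", (normalized,)
        ).lastrowid
    conn.execute(
        "INSERT INTO identities (provider, provider_uid, user_id) VALUES (?, ?, ?)",
        (provider, provider_uid, user_id),
    )
    return user_id


def submit_review(conn: sqlite3.Connection, user_id: int,
                  course_id: str, body: str) -> bool:
    """Return True if stored, False if this user already reviewed the course."""
    try:
        conn.execute(
            "INSERT INTO reviews (user_id, course_id, body) VALUES (?, ?, ?)",
            (user_id, course_id, body),
        )
        return True
    except sqlite3.IntegrityError:
        return False
```

With this shape, a person who signs up by email and later logs in with Google resolves to the same user id, so the database constraint rejects a second review of the same course regardless of login method.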

What’s Next!

Spill The Course is live and fully functional. Here are some of the features planned for the next phase:

- Creator profiles where instructors can claim and manage their courses

- Helpful votes on reviews to surface the most trusted feedback

- Users will be able to report inappropriate or fake reviews

- More gamification elements to reward consistent, quality reviewers

- Trending and fast-growing courses based on activity signals

- Advanced admin tools including data export and review analytics

From a business perspective, the long-term model includes affiliate partnerships and premium visibility options, without compromising review integrity.

The goal is to evolve the platform into a scalable, trust-first ecosystem where students, reviewers, and instructors all benefit, while keeping transparency at the core.